Introduction

Back in June of 2022 I covered the top operator in my Fun With KQL – Top post. We showed how to create your own top 10 lists, for example what were the top 5 computers ranked by free disk space.

What if you needed your top results in a nested hierarchy? For example, you wanted to know which three objects in the Perf table had the most entries? But, for each one of those, what were the three counters with the most entires?

That’s where the top-nested operator comes in. It allows you to create top lists in nested, also called hierarchical levels.

Before we begin our discussion on top-nested, you should be aware that the samples in this post will be run inside the LogAnalytics demo site found at https://aka.ms/LADemo. This demo site has been provided by Microsoft and can be used to learn the Kusto Query Language at no cost to you.

If you’ve not read my introductory post in this series, I’d advise you to do so now. It describes the user interface in detail. You’ll find it at https://arcanecode.com/2022/04/11/fun-with-kql-the-kusto-query-language/.

Note that my output may not look exactly like yours when you run the sample queries for several reasons. First, Microsoft only keeps a few days of demo data, which are constantly updated, so the dates and sample data won’t match the screen shots.

Second, I’ll be using the column tool (discussed in the introductory post) to limit the output to just the columns needed to demonstrate the query. Finally, Microsoft may make changes to both the user interface and the data structures between the time I write this and when you read it.

Top-Nested Basics

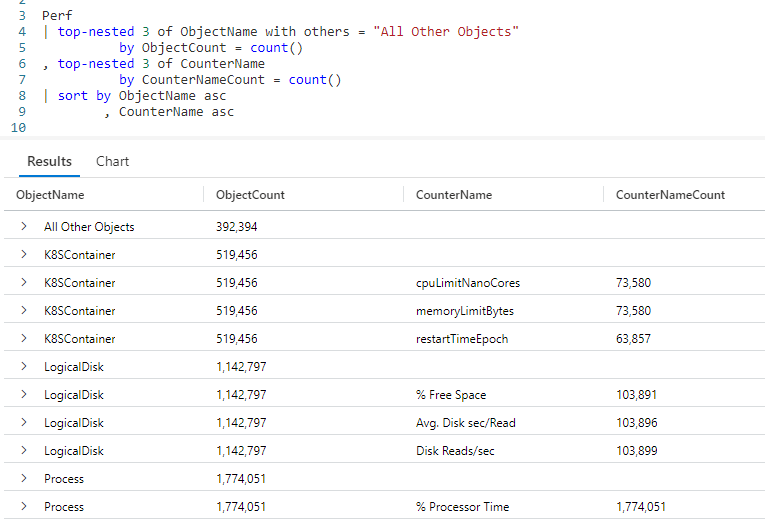

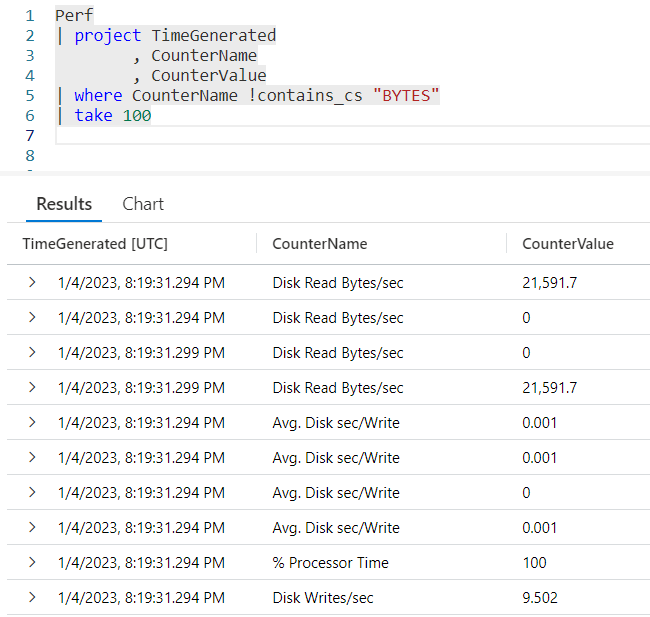

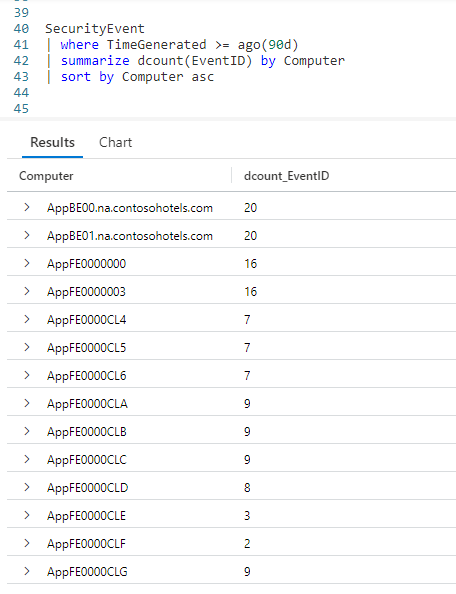

In the example below, we call the Perf table, and pipe it into our first top-nested operator. We then tell it how many items we want, in this case 3. Next, we tell it what we want three of, here we say we want the top 3 from our ObjectName column.

Next, after the by we indicate how we want to determine which ObjectName‘s are the top 3. Here we use the count aggregation, and storing that result in a new ObjectCount column.

OK, we’ve now setup the uppermost level for our top hierarchy. Now we need to tell the query what should be in the nested level. To do that, we use a comma, then a second top-nested operator.

Into the second top-nested we again say we want 3 items, this time from the CounterName column. Again we’ll rank these by the count aggregation, storing the result in the CounterNameCount column.

Finally we sort the results by the counts in descending order.

In the results, the first item was the Process object name, with 2,262,619 entries. It only had one CounterName associated with it, % Processor Time, which is why you only see one row for the Process object.

In second place is the ObjectName of LogicalDisk, with 1,286,540 entries. It had three counters associated with it. Of these, the Disk Read Bytes/sec took top place with 116,968 rows. In close second was Disk Bytes/sec with 116,965 entires in the Perf table. In third place for LogicalDisk was Disk Writes/sec.

The ObjectName that came in third was the K8SContainer, and you can see the three CounterName values associated with it.

Now that you’ve seen it in action, the top-nested operator is pretty simple to use and understand. Just tell it how many items you want, what item you want, and what aggregation you want to use to rank them.

Multiple Levels for Top-Nested

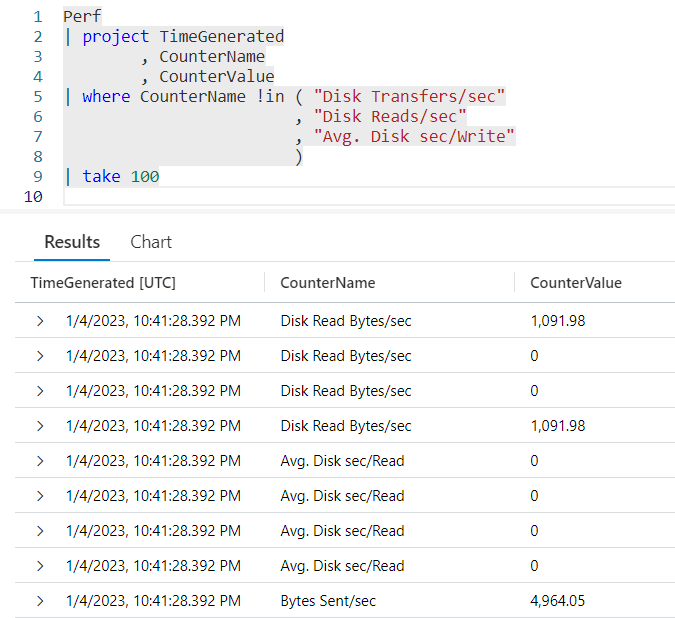

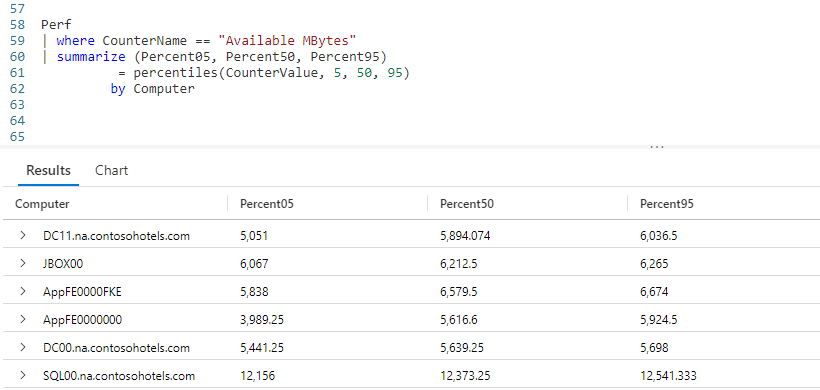

You can have many nested levels, in this next example we’ll use three levels of nesting.

Here we decided to get the top 5 of each level, and we went three levels deep, ObjectName, CounterName, then InstanceName. We could have gone even deeper, we just need additional top-nested operators for each level of our hierarchy.

Also note we decided to sort by the names of our various objects instead of the counter totals. This is a design decision that can be made by the ultimate end user of your query, and will be dependant on their needs.

Additionally, while I used 5 at every level, this isn’t a requirement. I could have used top-nested 3 at the ObjectName level, then top-nested 5 at the CounterName level, and perhaps top-nested 10 at the InstanceName level. Again, this can be determined by the needs of your end user, and Kusto is flexible enough to handle those needs.

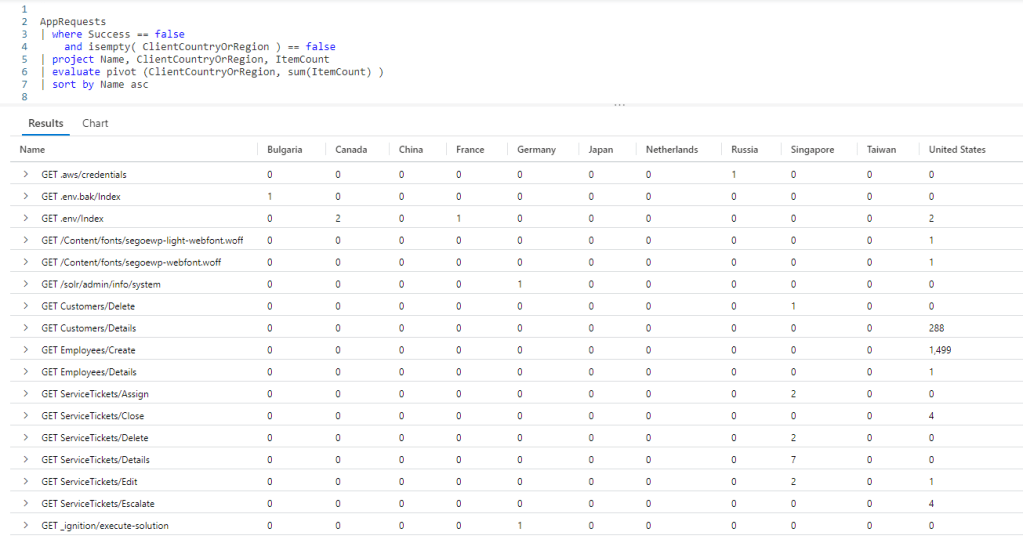

Using Other Aggregations

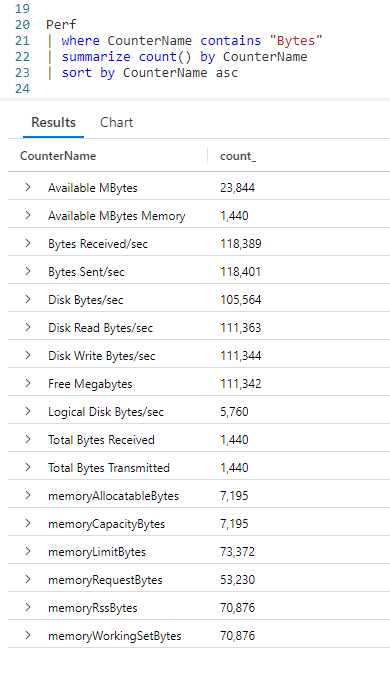

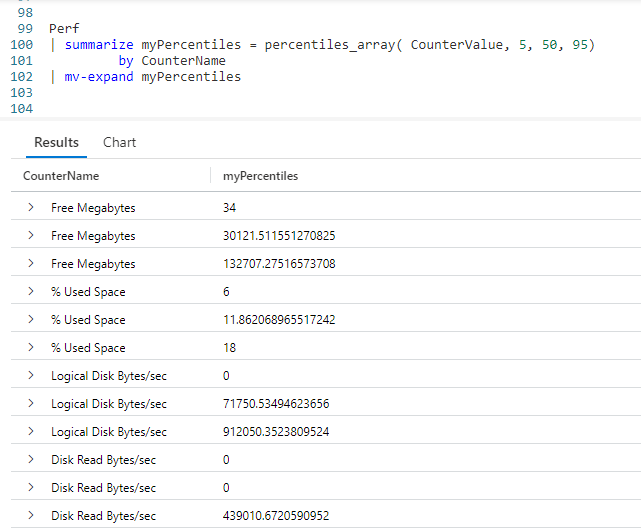

So far we’ve been using the count aggregation for our needs. We can use any aggregation type we need to with top-nested. Take a look at this example.

Here, we used the sum aggregation, summing up our CounterValue column in order to determine the rank within our top-nested hierarchy.

We could have used other aggregations, such as min or max (both of which we’ll see in the next blog post), or any of the many aggregations available in Kusto.

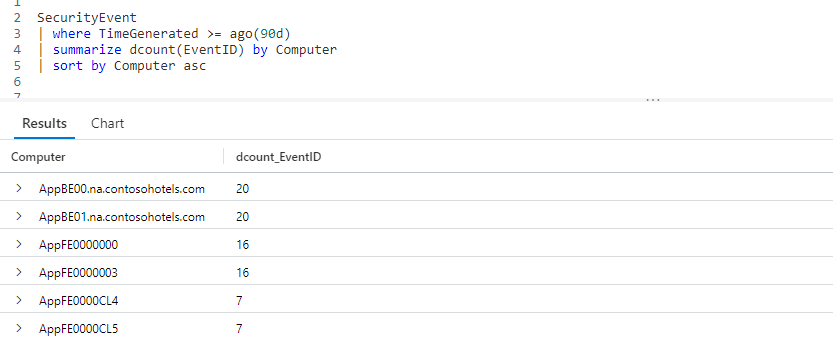

All Others

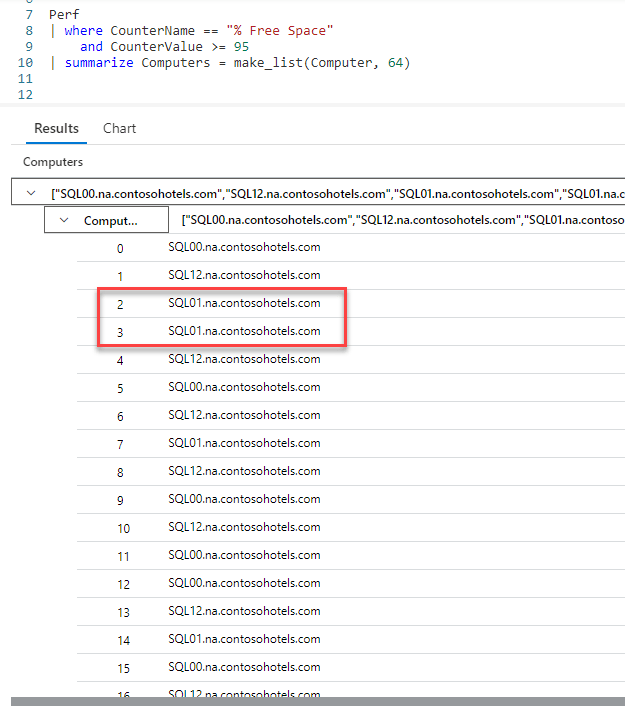

Sometimes it’s just as important to know what wasn’t included in your top list. The top-nested operator gives us this capability through the with others capability.

In this example, on the very top of the hierarchy you can see we’ve added with others = "All Other Objects" between the column to rank and the by. It is the with others that tells top-nested to aggregate the values not included in the final top list and display those results as well.

In the output, you see a row for "All Other Objects", this is the count for all the objects that were not in the top list.

The text All Other Objects was of my own choosing. I could have used any text here, like Not in the top list, Stuff not on top or Better luck next time.

Note that when determining the value for other it used the same aggregation function as the top-nested. Here we used count, but it could have been sum or whichever aggregation function we used.

Others at All Levels

In the previous example we only included others at the top level. We can use it at all levels if we wish. We’ll harken back to our original example with two top-nested levels, and include a others for each level.

In the second row, you can see K8SContainer, and All Other Counters in a sublevel, followed the the top 3 values for the CounterNames in the K8SContainer.

All Others at Sublevels Only

As you’ve seen many times in this Fun With KQL series, the Kusto Query Language is very flexible. This allows us a way to have others appear only at the sublevels, as you can see in this demonstration.

Here we only included with others at the second level of our nest. Do note this resulted in an extra row where the ObjectName is empty.

See Also

The following operators, functions, and/or plugins were used or mentioned in this article’s demos. You can learn more about them in some of my previous posts, linked below.

Conclusion

Getting a hierarchy of top items is a common business need. As you saw in this post, top-nested allows you to accomplish this easily. It also includes the ability to include the other values not included in the top-nested list.

The demos in this series of blog posts were inspired by my Pluralsight courses on the Kusto Query Language, part of their Kusto Learning Path.

The first course in the series is Kusto Query Language: Getting Started. The second course is Kusto Query Language: Beginning Operators. The third course, to be published soon, is Kusto Query Language: Basic Scalar Operators

I have two previous Kusto courses on Pluralsight as well. Kusto Query Language (KQL) from Scratch and Introduction to the Azure Data Migration Service, and these are two of the many courses I have on Pluralsight. All of my courses are linked on my About Me page.

If you don’t have a Pluralsight subscription, just go to my list of courses on Pluralsight . At the top is a Try For Free button you can use to get a free 10 day subscription to Pluralsight, with which you can watch my courses, or any other course on the site.